Artificial intelligence has quickly become part of everyday life. Millions of people now use tools like ChatGPT to write emails, summarize articles, brainstorm ideas, translate languages, research products, and answer daily questions. AI can save time, increase productivity, and help us think faster. In many ways, it has become one of the most useful technologies of our generation.

But convenience can also create overconfidence.

The more helpful AI becomes, the more tempting it is to ask it for everything. That is where caution matters. AI is powerful, but it is not wise in the human sense. It does not carry moral responsibility. It does not hold a professional license. It does not understand grief, faith, law, illness, or human consequences the way a trusted expert, family member, counselor, physician, attorney, or religious leader does.

As AI becomes more embedded in modern life, one of the most important skills is not just knowing how to use it, but also knowing when not to use it.

Here are seven areas where AI should never be your final authority, and in some cases should not be used at all.

1. Do Not Use AI as a Religious Authority in Sensitive Matters

Faith is deeply personal, but it is also deeply communal and historical. Religious texts carry centuries of scholarship, interpretation, tradition, and sensitivity. AI can summarize passages or explain general background, but it should never replace a qualified religious leader when it comes to interpretation, doctrine, or public guidance.

This is especially important in countries or communities where religion is central to law, identity, and public life. Asking AI to produce a quote from a sacred text to support your personal argument can lead to misunderstanding, offense, or serious consequences if the interpretation is inaccurate or disrespectful.

For example, someone might ask AI to provide a verse from the Quran to support a political opinion or personal dispute. That may sound simple, but sacred texts are not meant to be treated like random quotations pulled out of context. A verse without proper understanding can distort meaning and create harm.

The same principle applies to other religions as well. Whether the text is the Quran, the Bible, the Torah, the Bhagavad Gita, or another sacred work, interpretation should be handled with humility and care.

Better use of AI:

Use AI for general educational background, historical summaries, or language assistance. For interpretation, doctrine, or guidance, consult a trusted imam, priest, rabbi, monk, pastor, or other qualified religious scholar.

2. Do Not Give AI Your Passwords, Banking Access, or Sensitive Personal Credentials

In the age of digital convenience, security is more important than ever. One of the clearest lines you should never cross is sharing passwords, banking information, account recovery details, or other sensitive login credentials with AI.

Some people make the mistake of asking AI to generate and remember passwords for them or, worse, paste real account details into a chatbot while asking for help organizing their digital life. That is not a safe habit.

Your bank account, email inbox, tax records, cloud storage, and private documents are too important to expose casually. Even setting aside the technical security of any particular chatbot, the habit itself is reckless. Sensitive information should be stored only in tools specifically designed for secure credential management.

For example:

- Asking AI to save a password for your online bank account

- Pasting your email login into a chat window and asking for troubleshooting help

- Sharing your Social Security number, debit card number, or tax ID for convenience

These are all serious mistakes.

Better use of AI:

Ask AI to explain what makes a strong password, or how to improve online security habits. Then use a trusted password manager and enable multi-factor authentication on important accounts.
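For readers comfortable running a small script, here is a minimal sketch of generating a strong password on your own machine, so the password never passes through a chat window. It uses Python's standard `secrets` module. The function name `generate_password`, the default length of 20, and the 12-character minimum are illustrative assumptions, not official requirements. A trusted password manager's built-in generator does the same job and is the simpler choice for most people.

```python
import secrets
import string

# All letters, digits, and punctuation; adjust if a site rejects some symbols.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a random password containing a lowercase letter, an uppercase
    letter, a digit, and a symbol.

    The 12-character minimum is an illustrative assumption for this sketch.
    """
    if length < 12:
        raise ValueError("length must be at least 12 characters")
    while True:
        # secrets.choice draws from the operating system's secure random source.
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if (
            any(c.islower() for c in candidate)
            and any(c.isupper() for c in candidate)
            and any(c.isdigit() for c in candidate)
            and any(c in string.punctuation for c in candidate)
        ):
            return candidate


if __name__ == "__main__":
    # Prints a new password locally; store it in a password manager, not a chat.
    print(generate_password())
```

The script redraws until every character class is present, which keeps each accepted password fully random rather than forcing fixed positions for a digit or symbol.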

3. Do Not Rely on AI for Medical Diagnosis

AI can be useful for general wellness education. It can explain the differences between vitamins, summarize common symptoms, suggest questions to ask your doctor, or help you understand a diagnosis after you have already received one from a licensed professional.

But AI should never be the one diagnosing you.

A chatbot cannot examine your body, measure your blood pressure, review your lab results in full clinical context, order imaging, or detect the subtle warning signs that an experienced physician would catch. Health is too important for guesswork.

Relying on a chatbot for diagnosis is even more dangerous because medical symptoms often overlap. A headache could be stress, dehydration, high blood pressure, or something far more serious. Chest pain could be heartburn, anxiety, or a medical emergency. AI may offer possibilities, but it should not be trusted to determine what is actually happening.

For example:

- Asking AI whether a lump is cancer

- Asking AI whether you can skip going to the emergency room

- Asking AI to prescribe treatment based only on your written symptoms

- Using AI alone to decide whether your child’s fever is dangerous

These are not safe uses.

Better use of AI:

Use it to prepare for an appointment, keep a symptom journal, or translate medical terms into plain language. For diagnosis, treatment, prescriptions, or emergencies, go to a licensed healthcare professional.

4. Do Not Use AI to Create Deepfake Adult Content or Exploit Real People

One of the darkest abuses of modern AI is the creation of fake explicit content using real people’s faces, voices, or likenesses. This is not creativity. It is exploitation.

Using AI to place a real person into sexualized photographs, adult videos, or other explicit material is deeply harmful. It violates consent, damages reputations, can destroy careers and relationships, and in many places can lead to serious legal consequences. When minors are involved, even in synthetic or manipulated form, the act crosses an even more severe line and is treated as a crime in many jurisdictions.

This issue has become more urgent as generative tools become more accessible. What once required advanced technical skill can now be attempted with consumer-level tools, making the ethical responsibility even greater.

Examples of misuse include:

- Putting a celebrity’s face into explicit material

- Altering a photo of someone you know into sexualized content

- Generating fake revenge material against an ex-partner

- Using the image of an underage person in any adult context

None of this is acceptable.

Better use of AI:

Use image and video tools for design, education, art, animation, branding, or entertainment that respects consent and human dignity. Real people are not raw material for humiliation.

5. Do Not Hand Over Your Most Serious Personal Decisions to AI

AI can help you organize your thoughts, but it should never become the decision-maker for the most important choices in your life.

Human decisions involving marriage, divorce, wills, inheritance, family conflict, career changes, end-of-life planning, or major financial commitments are not math problems. They involve values, relationships, memory, responsibility, fairness, emotion, and long-term consequences. AI can generate pros and cons, but it does not know your heart, your history, or the hidden cost of a bad decision.

For example:

- Asking AI whether to divorce your spouse

- Asking AI which child deserves a larger inheritance

- Asking AI whether to disinherit a family member

- Asking AI whether to abandon your current career overnight

- Asking AI how to divide your estate without consulting an attorney

These are moments that deserve thoughtful human counsel.

This does not mean AI is useless here. It can help draft questions for a lawyer, outline issues to discuss with a counselor, or help you compare options in a structured way. But it should never replace legal advice, emotional maturity, or personal responsibility.

Better use of AI:

Use it as a brainstorming assistant, not a moral judge. For major life choices, speak to professionals and the people directly affected.

6. Do Not Use AI to Plan Illegal, Violent, or Harmful Acts

This should be obvious, but it must still be said clearly: AI should never be used to help commit crimes, evade law enforcement, build weapons, or cause harm.

The misuse of AI for illegal activity is a serious and growing concern. People may be tempted to use it for shortcuts in tax evasion, fraud, hacking, violence, or criminal planning. But technology does not make wrongdoing less wrong. It only lets the harm reach further.

Examples include:

- Asking how to build a bomb

- Asking how to assemble weapons for violent use

- Asking how to avoid taxes illegally

- Asking how to rob a bank or escape a warrant

- Asking how to break out of jail

- Asking how to harm elected officials or public figures

These are not gray areas. They are direct abuses of technology.

AI should be used to solve problems, not create victims.

Better use of AI:

Use it to learn the law, understand compliance, improve safety, or find lawful ways to protect yourself and your property. If a goal depends on violence, deception, or hiding from the law, AI should not be part of it.

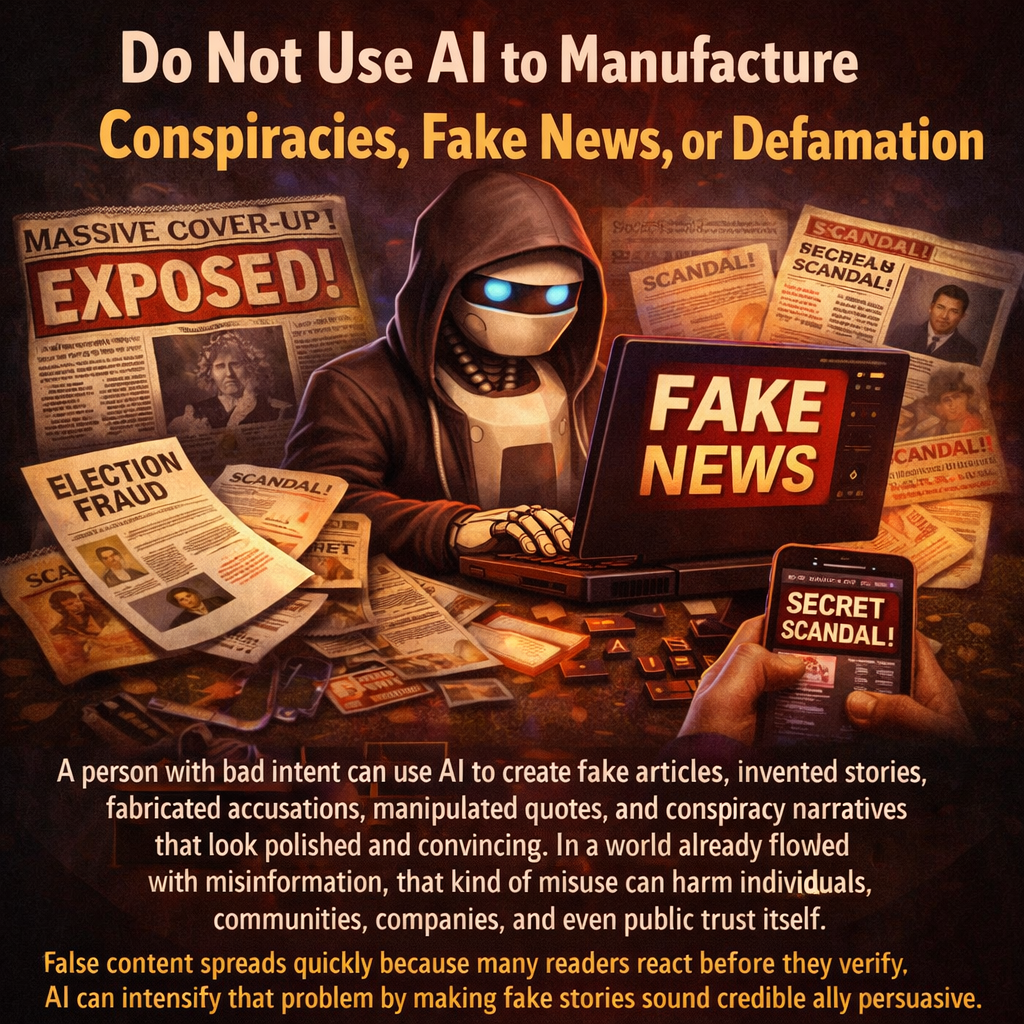

7. Do Not Use AI to Manufacture Conspiracies, Fake News, or Defamation

AI can write fast. That is exactly why it can be dangerous in the wrong hands.

A person with bad intent can use AI to create fake articles, invented stories, fabricated accusations, manipulated quotes, and conspiracy narratives that look polished and convincing. In a world already flooded with misinformation, that kind of misuse can harm individuals, communities, companies, and even public trust itself.

False content spreads quickly because many readers react before they verify. AI can intensify that problem by making fake stories sound credible and emotionally persuasive.

Examples include:

- Inventing a scandal about a real person

- Creating false accusations against a business rival

- Writing fake political rumors and presenting them as fact

- Building fear-based doomsday narratives to cause panic

- Generating fabricated screenshots, quotes, or “news reports”

This type of behavior is not harmless entertainment. It can destroy reputations, mislead the public, and create chaos.

Better use of AI:

Use it to improve clarity, summarize verified reporting, compare sources, or strengthen truthful communication. Truth still matters, and AI should never become a factory for lies.

Final Thoughts: AI Is Powerful, but It Is Not a Substitute for Wisdom

AI is one of the most remarkable tools of our time. It can help us move faster, write better, and think more broadly. It can support education, business, creativity, and daily productivity in ways that were hard to imagine only a few years ago.

But power without boundaries leads to misuse.

The smartest people in the AI era will not be the ones who ask AI everything. They will be the ones who understand where human judgment must remain in charge.

Use AI to brainstorm.

Use AI to learn.

Use AI to draft.

Use AI to organize.

But do not use AI as your doctor, your cleric, your lawyer, your conscience, or your weapon.

Technology should serve humanity, not replace responsibility.

At Stefanus.AI, I believe the future of AI is not just about what machines can do. It is about what humans should do with wisdom, restraint, and integrity.

Because in the end, the most important intelligence is still human judgment.